Comprehension Debt - the hidden cost of AI generated code.

March 14, 2026

Comprehension debt is the hidden cost to human intelligence and memory resulting from excessive reliance on AI and automation. For engineers, it applies most to agentic engineering.

There’s a cost that doesn’t show up in your velocity metrics when teams go deep on AI coding tools. Especially when its tedious to review all the code the AI generates. This cost accumulates steadily, and eventually it has to be paid - with interest. It’s called comprehension debt or cognitive debt.

Comprehension debt is the growing gap between how much code exists in your system and how much of it any human being genuinely understands.

Unlike technical debt, which announces itself through mounting friction - slow builds, tangled dependencies, the creeping dread every time you touch that one module - comprehension debt breeds false confidence. The codebase looks clean. The tests are green. The reckoning arrives quietly, usually at the worst possible moment.

Margaret-Anne Storey’s describes a student team that hit this wall in week seven: they could no longer make simple changes without breaking something unexpected. The real problem wasn’t messy code. It was that no one on the team could explain why design decisions had been made or how different parts of the system were supposed to work together. The theory of the system had evaporated.

That’s comprehension debt compounding in real time.

I’ve read Hacker News threads that captured engineers genuinely wrestling with the structural version of this problem - not the familiar optimism versus skepticism binary, but a field trying to figure out what rigor actually looks like when the bottleneck has moved.

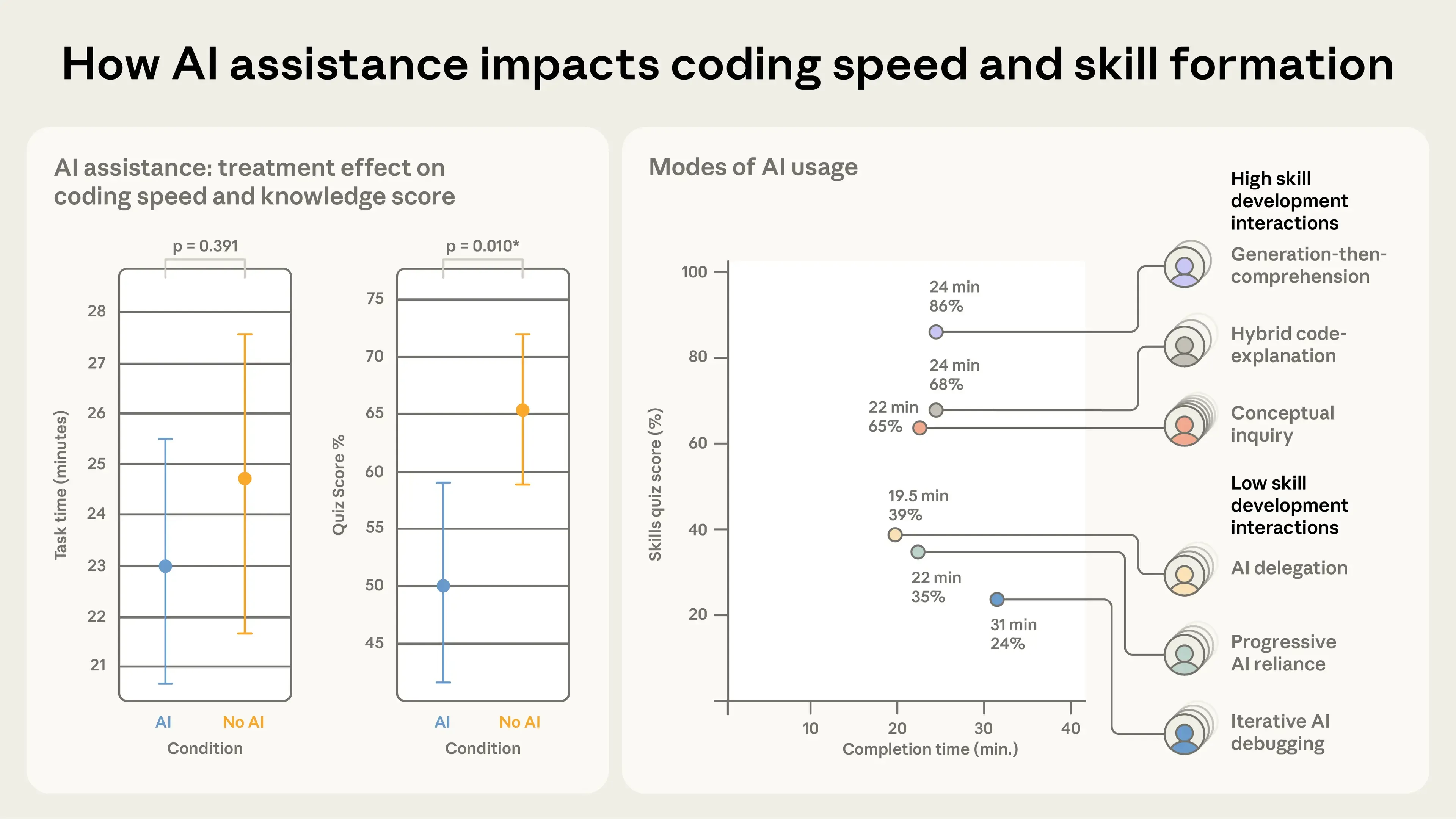

A recent Anthropic study titled “How AI Impacts Skill Formation” highlighted the potential downsides of over-reliance on AI coding assistants. In a randomized controlled trial with 52 software engineers learning a newlibrary, participants who used AI assistance completed the task in roughly the same time as the control group but scored 17% lower on a follow-up comprehension quiz (50% vs. 67%). The largest declines occurred in debugging, with smaller but still significant drops in conceptual understanding and code reading. The researchers emphasize that passive delegation (“just make it work”) impairs skill development far more than active, question-driven use of AI. The full paper is available arXiv: https://arxiv.org/abs/2601.20245.

There is a speed asymmetry problem here

AI generates code far faster than humans can evaluate it. That sounds obvious, but the implications are easy to underestimate.

When a developer on your team writes code, the human review process has always been a bottleneck - but a productive and educational one. Reading their PR forces comprehension. It surfaces hidden assumptions, catches design decisions that conflict with how the system was architected six months ago, and distributes knowledge about what the codebase actually does across the people responsible for maintaining it.

AI-generated code breaks that feedback loop. The volume is too high. The output is syntactically clean, often well-formatted, superficially correct - precisely the signals that historically triggered merge confidence. But surface correctness is not systemic correctness. The codebase looks healthy while comprehension quietly hollows out underneath it.

I read one engineer say that the bottleneck has always been a competent developer understanding the project. AI doesn’t change that constraint. It creates the illusion you’ve escaped it.

And the inversion is sharper than it looks. When code was expensive to produce, senior engineers could review faster than junior engineers could write. AI flips this: a junior engineer can now generate code faster than a senior engineer can critically audit it. The rate-limiting factor that kept review meaningful has been removed. What used to be a quality gate is now a throughput problem.

I love tests, but they aren’t a complete answer

The instinct to lean harder on deterministic verification - unit tests, integration tests, static analysis, linters, formatters - is understandable. I do this a lot in projects heavily leaning on AI coding agents. Automate your way out of the review bottleneck. Let machines check machines.

This helps. It has a hard ceiling.

A test suite capable of covering all observable behavior would, in many cases, be more complex than the code it validates. Complexity you can’t reason about doesn’t provide safety though. And beneath that is a more fundamental problem: you can’t write a test for behavior you haven’t thought to specify.

Nobody writes a test asserting that dragged items shouldn’t turn completely transparent. Of course they didn’t. That possibility never occurred to them. That’s exactly the class of failure that slips through, not because the test suite was poorly written, but because no one thought to look there.

There’s also a specific failure mode worth naming. When an AI changes implementation behavior and updates hundreds of test cases to match the new behavior, the question shifts from “is this code correct?” to “were all those test changes necessary, and do I have enough coverage to catch what I’m not thinking about?” Tests cannot answer that question. Only comprehension can.

The data is starting to back this up. Research suggests that developers using AI for code generation delegation score below 40% on comprehension tests, while developers using AI for conceptual inquiry - asking questions, exploring tradeoffs - score above 65%. The tool doesn’t destroy understanding. How you use it does.

Tests are necessary. They are not sufficient.

Lean on specs, but they’re also not the full story.

A common proposed solution: write a detailed natural language spec first. Include it in the PR. Review the spec, not the code. Trust that the AI faithfully translated intent into implementation.

This is appealing in the same way Waterfall methodology was once appealing. Rigorously define the problem first, then execute. Clean separation of concerns.

The problem is that translating a spec to working code involves an enormous number of implicit decisions - edge cases, data structures, error handling, performance tradeoffs, interaction patterns - that no spec ever fully captures. Two engineers implementing the same spec will produce systems with many observable behavioral differences. Neither implementation is wrong. They’re just different. And many of those differences will eventually matter to users in ways nobody anticipated.

There’s another possibility with detailed specs worth calling out: a spec detailed enough to fully describe a program is more or less the program, just written in a non-executable language. The organizational cost of writing specs thorough enough to substitute for review may well exceed the productivity gains from using AI to execute them. And you still haven’t reviewed what was actually produced.

The deeper issue is that there is often no correct spec. Requirements emerge through building. Edge cases reveal themselves through use. The assumption that you can fully specify a non-trivial system before building it has been tested repeatedly and found wanting. AI doesn’t change this. It just adds a new layer of implicit decisions made without human deliberation.

Learn from history

Decades of managing software quality across distributed teams with varying context and communication bandwidth has produced real, tested practices. Those don’t evaporate because the team member is now a model.

What changes with AI is cost (dramatically lower), speed (dramatically higher), and interpersonal management overhead (essentially zero). What doesn’t change is the need for someone with deep system context to maintain coherent understanding of what the codebase is actually doing and why.

This is the uncomfortable redistribution that comprehension debt forces.

As AI volume goes up, the engineer who truly understands the system becomes more valuable, not less. The ability to look at a diff and immediately know which behaviors are load-bearing. To remember why that architectural decision got made under pressure eight months ago.

To tell the difference between a refactor that’s safe and one that’s quietly shifting something users depend on. That skill becomes the scarce resource the whole system depends on.

There’s a bit of a measurement gap here too

The reason comprehension debt is so dangerous is that nothing in your current measurement system captures it.

Velocity metrics look immaculate. DORA metrics hold steady. PR counts are up. Code coverage is green.

Performance calibration committees see velocity improvements. They cannot see comprehension deficits, because no artifact of how organizations measure output captures that dimension. The incentive structure optimizes correctly for what it measures. What it measures no longer captures what matters.

This is what makes comprehension debt more insidious than technical debt. Technical debt is usually a conscious tradeoff - you chose the shortcut, you know roughly where it lives, you can schedule the paydown. Comprehension debt accumulates invisibly, often without anyone making a deliberate decision to let it. It’s the aggregate of hundreds of reviews where the code looked fine and the tests were passing and there was another PR in the queue.

The organizational assumption that reviewed code is understood code no longer holds. Engineers approved code they didn’t fully understand, which now carries implicit endorsement. The liability has been distributed without anyone noticing.

The regulation horizon is closer than it looks

Every industry that moved too fast eventually attracted regulation. Tech has been unusually insulated from that dynamic, partly because software failures are often recoverable, and partly because the industry has moved faster than regulators could follow.

That window is closing. When AI-generated code is running in healthcare systems, financial infrastructure, and government services, “the AI wrote it and we didn’t fully review it” will not hold up in a post-incident report when lives or significant assets are at stake.

Teams building comprehension discipline now - treating genuine understanding, not just passing tests, as non-negotiable - will be better positioned when that reckoning arrives than teams that optimized purely for merge velocity.

What comprehension debt actually demands

The right question for now isn’t “how do we generate more code?” It’s “how do we actually understand more of what we’re shipping?” so we can make sure our users get a consistently high quality experience.

That reframe has practical consequences. It means being ruthlessly explicit about what a change is supposed to do before it’s written. It means treating verification not as an afterthought but as a structural constraint. It means maintaining the system-level mental model that lets you catch AI mistakes at architectural scale rather than line-by-line. And it means being honest about the difference between “the tests passed” and “I understand what this does and why.”

Making code cheap to generate doesn’t make understanding cheap to skip. The comprehension work is the job.

AI handles the translation. But someone still has to understand what was produced, why it was produced that way, and whether those implicit decisions were the right ones - or you’re just deferring a bill that will eventually come due in full.

You will pay for comprehension sooner or later. The debt accrues interest rapidly.